https://dl.acm.org/doi/pdf/10.1145/3656404

I saw this academic paper on the Internet and thought it might be worth a read for those of us interested in adding optimizations to the Zig compiler pipeline.

https://dl.acm.org/doi/pdf/10.1145/3656404

I saw this academic paper on the Internet and thought it might be worth a read for those of us interested in adding optimizations to the Zig compiler pipeline.

Reminded me of this lobsters comment: Divorce from LLVM | Lobsters

The observation that, for the following code, both gcc and clang produce different machine code for f and g:

extern void eff();

int f(int x, int y) {

int r = x + y;

eff();

return r;

}

int g(int x, int y) {

eff();

int r = x + y;

return r;

}

This is a very deep design bug — compilers shouldn’t be able to tell the difference between f and g.

The comment you link to raises an important point:

It is not per se a problem that the code for g is suboptimal—getting that right involves annoying cost models that may not generalise from a three-line function.

This is less about LLVM than it is about C, especially the combination of the blind cliff of compilation units and pervasive aliasing. Of course that doesn’t affect the program you’re showing, the rules say they’re the same program. But it does affect what optimizations are even worth pursuing in the first place. A trivial function like that would no doubt get inlined, and what the optimizer does from there is a separate question.

But just as a heuristic, extern function calls as execution barriers is a critical one. It isn’t always true, but it’s expensive to disprove.

So I see the design flaw as primarily belonging to C. I would expect a ground-up new compiler to make the same “mistake” on an isolated compilation unit like this, and fix it at the call site after inlining, something I expect both GCC and LLVM would already do.

I don’t think C semantics is necessary relevant for this example? There are no compilation units in the picture, and there are no aliasing questions (C semantics is tight enough to guarantee that x, y, and r are not aliased, and can’t be mutated by eff)

This all have to do with the fact that LLVM IR preserves the “fact” that int r = x + y goes before “eff()” in the source order, although that is irrelevant for the task at hand (and, as the observable difference in the generated assembly demonstrates, actively harms optimization pipeline).

That’s what I was referring to here:

The question is how often will it be worth canonicalizing this? How much cycle time is is worth spending to prove that it’s ok?

There is definitely more than one compilation unit involved, let’s look at the original:

eff() isn’t defined, so it has to come from somewhere else. That’s a second compilation unit, and it severely restricts how well the compiler can reason about the effects of calling it.

This isn’t a trivial canonicalization at all, though. In many programs, but not this one, the blind function call can’t be proven not to affect the course of action. That kind of reasoning belongs at a later point in the pipeline, it isn’t at all like turning a - b into a + (-b). Order is something the compiler needs to know about in general, if only so it can deduce cases where rearrangement is legal.

A simple rule like “any blind function call is represented as an execution barrier” can be reversed later in the pipeline, but it’s too expensive to bother with during canonicalization.

In other words, an annoying cost model which may not generalize from a three-line function.

But that’s the thing, you don’t need to canonicalize this! With the right IR, the two version would be unrepresentable.

The bug here is not that LLVM fails to see that there’s an equivalence. The bug is that LLVM IR allows expressing this difference at all. The two programs should have been equivalent already by the time LLVM seems them at all.

Right now, the contract between frontend and the backend is that the front emits a sequence of instructions. The right contract is for the front-end to emit a set of instructions, and a set of (data or side-effect) edges between instructions.

I think we don’t have to go so far as to think about contracts and C semantics. It looks to me like a very simple pointer escape analysis. x and y are both stack variables, and no pointer has been taken. As per the C rules, it’s forbidden to mutate them through code you’re not seeing, including external function calls. Curiously, applying the const keyword to the arguments doesn’t change the generated code. This would be an extra hint to the compiler that the variables cannot be changed and that the optimization can be applied, as it is illegal to modify variables declared as const (not pointers). So it looks like the compiler just completely gives up on any optimization across external function calls. I don’t think we need to change C semantics, as it already has everything needed to generate optimal code. Nor do we need to review the contracts between front-end and back-end. We just need the compilers to at least try to optimize across external function calls.

Thank you, this is all that I’m saying here. There are good reasons why compilers do this, which don’t show up in three-line test functions, which will almost certainly be inlined if the compiler can do so.

Inlining would mean that the compiler can throw away all the register juggling, because the function barrier is no longer in place. When that happens, the difference in code between f and g won’t exist, because the compiler can arrange for x and y to live in registers which aren’t affected by the function call.

I do want to note that adding extern to f and g, which should be a hint that they might not be inline-able later, does not cause the rearrangement to happen. So it’s not in fact the compiler being smart, it’s missing a trick.

The problem being, when that is worthwhile, which it isn’t in the example, it’s frequently not possible. For a language like Zig, where being object-blind is the exception, and whole-program compilation is the rule, it’s worth spending more time figuring out what functions ‘mean’.

If I’m interpreting you correctly, you’re saying that with the right IR, the two versions would be represented identically. This is an interesting proposition worthy of careful consideration. You might be right.

But I think we both agree that an IR needs to be able to represent execution order. Say, if x and y were pointers, then we really couldn’t know if eff() mutates those addresses, so we would need to represent that case. So I don’t think this is about the IR, but rather, what IR is generated, and also, what IR can be generated.

What you’re proposing, I believe, is that there be a specific IR representation for order-independent execution. Which I do think is interesting, but my initial take is that it wouldn’t help much, because:

The thing is, they aren’t equivalent. One of them requires some register juggling to set up the function call, and one of them doesn’t. So even if it’s represented as order-independent, the compiler still needs to weigh the two orders, and pick the cheaper one.

That’s data flow analysis, and it happens on the IR, not during IR generation. The compiler needs to do the same thing: reason about which order is cheaper, and determine whether a reordering is even valid. Those two tasks belong together, for various reasons.

It’s possible, which I believe to be your suggestion, to move some of the order analysis into an earlier phase of the compiler, but I don’t think that would make it cheaper. In fact, I don’t see how it could make it cheaper. The completed control flow graph contains all the information required. In fact, constructing the complete control flow graph is how, in general, order independence is determined.

When you and I eyeball the code, and say “wait, these two things are order independent”, we’re partitioning the control flow graph. Canonicalizing subtraction out of existence reduces the amount of analysis required, but having an IR which can represent order-independent control flow means the IR generator is creating a control flow graph, which data flow analysis needs to do anyway. But if the IR generator does it, it throws away everything but the IR, so some amount of work would have to be repeated.

In short, there’s a stage which needs to happen, which takes ordered code, decides what the necessary orderings inside that ordered code are, and moves things around when it makes for a more optimal program. That has to start with ordered code, because program order is real if you’re not writing Haskell. Since that’s a requirement, the cheapest order to hand off to control flow and data analysis, is the order in which the program is written.

The simplest explanation of the “f and g” problem is that the compilers don’t have separate heuristics for tiny toy programs. In real life, a three-liner gets inlined.

It would be an interesting (but very time expensive) experiment to add a compiler pass which fixes the “f and g” problem to LLVM, and see if it has any effect on the benchmarks. My guess is no.

No, I don’t think so. An IR doesn’t need to be able to represent execution order, because execution order doesn’t exist. When instructions are executed by the CPU, they are not executed in any particular order — they are executed concurrently, and may even get executed and then un-executed due to speculation. The CPU guarantees that it’ll observe all dependencies, but it doesn’t care about order as a whole. “Order” is just not a useful concept when modeling computation. (*)

What an IR needs to be able to do is to express dependencies. And that’s the design bug — many IRs don’t have good support for expressing dependency directly, so they resort to using order for encoding dependency information. But this is an overspecification — it encodes irrelevant information, which then befuddles actual optimizations.

(*) there’s an annoying fact that machine code does have an order, but that’s another design bug.

Also, I must say that I have no idea what I am talking about, and you should instead listen to people who actually do compiler backends, rather than to some random front-end developer ![]()

Compilers do reduce programs to dependencies, when they construct the control flow graph, and do data flow analysis on it.

In order for the IR to be a pure expression of control flow, with separation of dependent and independent pathways, the IR generator itself would have to be the part of the compiler which constructs the control flow graph, and does data flow analysis. Since it’s generating IR, it would have to do this directly, from the AST.

It turns out that this step is easier done using the IR, hence, the IR generator doesn’t do that step. It happens elsewhere. But it does happen.

For the record, I’ve written precisely one compiler, and it compiles PEG patterns into VM code, so, not especially demanding stuff (and the optimization pass, which absolutely needs to do dependency analysis, happens after the “intermediate representation” is created). I mostly cribbed off Roberto Ierusalimschy, although I did find a couple of additional optimizations in the process (and am working on a pretty big one, not to stray off the topic too far).

But that’s one more than none!

The nodes in the CFG are basic blocks – linear sequences of multiple instructions, and its the basic blocks that contain redundant sequencing information. You can restrict basic blocks to be a single instruction long, and that is in fact exactly how one gets a sea of nodes: Sea of Nodes

That’s an interesting read! And it’s reminding me that, while I downloaded the original paper to my tablet, I haven’t read it yet, so we’ve had a bit of topic drift here.

We may just be circling around a terminology distinction. Everything between the parsed source code is IR, Zig has two (ZIR and AIR), something I’ve been meaning to investigate.

I think of IR generation, specifically, as a pass which creates a convenient form for the optimizer, and limits itself to very cheap and local optimizations and transformations. Maybe a bit of constant folding, some canonicalizations, anything which spares the optimizer some work. These days it usually does SSA as well.

But everything after that also uses intermediate representations, more or less by definition, up to the point where machine code is emitted. That includes all the DFA passes and so on, clearly.

So what happens in the “front end” vs the “back end” can get pretty murky.

I think the optimizations discussed here would scale really well to functions of any size. When we use the const keyword on the arguments, just tell the compiler “apply all the optimizations that you are used to, even in the presence of external functions”. And even in the absence of const, we can just say “if nobody has taken a pointer to a stack variable, apply all the optimizations that you are used to, even in the presence of external function calls”. In other words, don’t stop const optimizations or pointer escape analysis just because you encountered an external function call.

They can! And they do.

We have a puzzle here. Two functions, anyone can see that they’re identical in effect according to the abstract C machine. One of them is compiled suboptimally. Why?

Let’s eliminate some answers. It can’t be “the compiler authors don’t know it’s possible”. Folks who work on LLVM know the C standard better than me, you, or @matklad, even better than @andrewrk as a collective body (I’d bet on Andrew one on one!).

It also can’t be “the compiler doesn’t have a mechanism for rearranging code to make it more efficient”. That dog just won’t hunt, that’s most of what optimization does.

As an aside, I 100% believe that GCC and LLVM can both be improved upon for system code compiling. Maybe not for C code though, but even then, those compilers are beholden to some legacy choices which are leaving some efficiency on the table.

But this isn’t a low-hanging fruit thing. It isn’t that GCC and clang can’t rearrange g to avoid the register spill, it’s that they don’t. Why?

My answer, which is just a guess, is simple: the function was tagged as “always inline this”. It’s so simple than even when pulled out of object code, it’s amenable to link-time optimization. The heuristic skips control flow analysis entirely, because the function just exists as a skeleton to be used to emit other functions.

The fact that it’s suboptimal is exactly because calling it is not optimal to begin with. There’s never a reason to pay the function calling cost on something this simple, so the compiler doesn’t bother with it until it’s used somewhere.

I have some evidence of this! Watch what happens when we call g inside main:

See why g wasn’t optimized? There’s no g left!

My trick is to not actually care about standards compliance. This allows me to store more information in my brain because I don’t waste memory storing stupid things. Only things that can be derived from first principles are allowed in there, which makes it highly compressible.

I will gleefully ignore a standard if it moves the industry forward to a better de facto standard.

Everyone does this to some degree. For example, it’s undefined behavior for C source files to not end in a newline. Everyone ignores this part of the standard because it’s ridiculous. I think the standard got changed in response to this at some point.

And you can bet your ass if I was making a new browser, I wouldn’t implement DRM, while also trying to make my browser so popular that Netflix couldn’t ignore it.

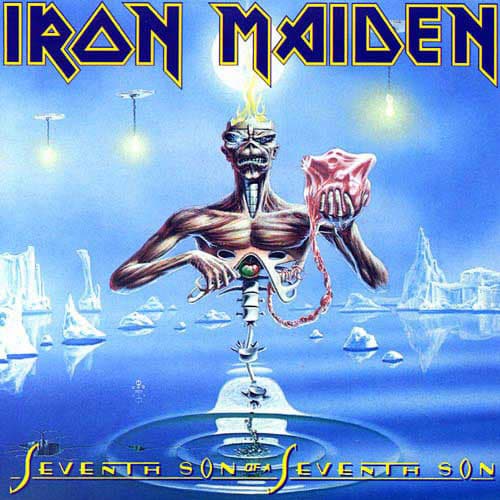

My favorite soundtrack while ignoring standards:

![]()

I am obviously listening to Moonchild (from

)

This is a good example of knowing the C standard better than I do ![]()

I’ve been working on some diffing stuff and discovered that the effect of applying a patch is undefined if the file being patched doesn’t end in a newline. I can’t help but think these things are related.

More seriously, I don’t think that what’s happening here. If look at the output, it includes the symbols for f, g and main. Someone can link this code and run it. To mark this symbol private, you’d want to say static int f. What happnes in main is that the arguments are also statically known, so the compiler is able to more thoroughly partially evaluate the thing.

When looking at godbolt, you actually want to do the opposite of this: don’t write main, write a non-static function — that way, it’s easier to isolate the interesting questions from effects like “compiler know Gauss trick”. So, for Zig you want to do something like

extern fn eff() void;

export fn f(x: i32, y: i32) i32 {

const r = x + y;

eff();

return r;

}

export fn g(x: i32, y: i32) i32 {

eff();

const r = x + y;

return r;

}

So, no, it is indeed the case that LLVM optimizes the two functions differently. And, again, the main thrust here is not that one version is worse than the other. The main thrust is that they are different.

If both version were bad, but identical, the fix would have been some local compiler optimization.

But the “different” output means that some more drastic changes required, including re-architecture the entire IR. Which is exactly the thing you might do as well if you are rewriting the backend anyway.

Yes, that’s true, but both GCC and LLVM perform link time optimization now. LLVM prefers to do this on bitcode, but can do it on object code as well.

A simple function like this can easily be inlined, even in pure machine code form. And it would be, because it does so little that it would be a waste to generate all the code necessary to make sure that x and y are where they have to be to satisfy the ABI.

That’s why it doesn’t matter that g does some juggling about: when it gets inlined, the code will be rewritten to use x and y in whatever registers are convenient, where “convenient” gets to include the things g does to get them out of the way to call eff().

So this is what I did, here’s eff.c

extern void eff() {

int goodbye = 4 + 5;

}

Here’s lib.c:

extern void eff();

int f(int x, int y) {

int r = x + y;

eff();

return r;

}

extern int g(int x, int y) {

eff();

int r = x + y;

return r;

}

And here’s main.c:

#include <stdio.h>

extern int g(int, int);

int h(int x, int y, int z) {

int m = 2 * x + z;

return g(m, y) + m;

}

int main() {

printf("%d", h(34, 5, 3));

}

Then run these:

clang -flto=thin -O2 eff.c lib.c main.c -o out

objdump -d -M intel out > objdump.s

What you get will be platform specific, here’s mine:

out2: file format mach-o arm64

Disassembly of section __TEXT,__text:

0000000100003f64 <_eff>:

100003f64: c0 03 5f d6 ret

0000000100003f68 <_g>:

100003f68: 20 00 00 0b add w0, w1, w0

100003f6c: c0 03 5f d6 ret

0000000100003f70 <_main>:

100003f70: ff 83 00 d1 sub sp, sp, #32

100003f74: fd 7b 01 a9 stp x29, x30, [sp, #16]

100003f78: fd 43 00 91 add x29, sp, #16

100003f7c: 68 12 80 52 mov w8, #147

100003f80: e8 03 00 f9 str x8, [sp]

100003f84: 40 01 00 10 adr x0, #40

100003f88: 1f 20 03 d5 nop

100003f8c: 05 00 00 94 bl 0x100003fa0 <_printf+0x100003fa0>

100003f90: 00 00 80 52 mov w0, #0

100003f94: fd 7b 41 a9 ldp x29, x30, [sp, #16]

100003f98: ff 83 00 91 add sp, sp, #32

100003f9c: c0 03 5f d6 ret

Disassembly of section __TEXT,__stubs:

0000000100003fa0 <__stubs>:

100003fa0: 10 00 00 b0 adrp x16, 0x100004000 <__stubs+0x4>

100003fa4: 10 02 40 f9 ldr x16, [x16]

100003fa8: 00 02 1f d6 br x16

So, yes, it does in fact inline everything.