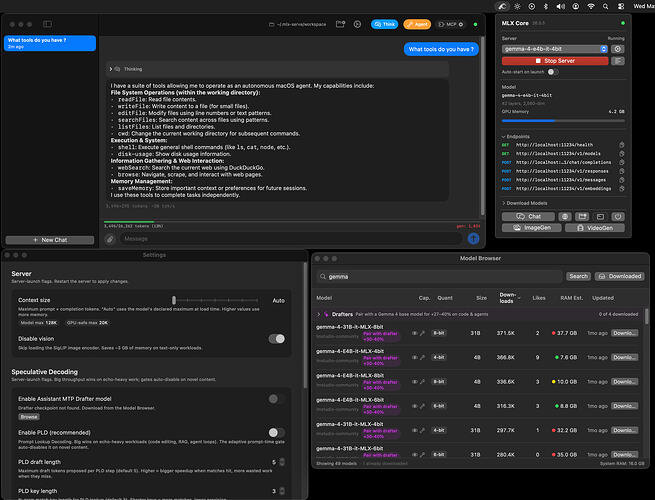

Hey all, I built a native Zig MLX AI inference engine for macOS on Apple Silicon.

Zig is slightly faster than Python, obviously, but for most AI inference memory is the bottleneck, so I also added speculative decoding to increase speed. How much it helps depends on the workload type. More charts, documentation, and the full source code are available at my GitHub repo: https://github.com/ddalcu/mlx-serve/
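For anyone who hasn't run into speculative decoding before, here is a rough Python sketch of the greedy version of the idea. It is not the mlx-serve code (that's in the repo); `speculative_step`, `generate`, `toy_target`, and `toy_draft` are made-up names, and the "models" are plain functions standing in for real networks. A small draft model guesses `k` tokens, the big target model checks them, and every matching guess is kept.

```python
from typing import Callable, List

# Assumption: a "model" is any function mapping a token sequence to the
# next token under greedy decoding. Real engines work on logits instead.
NextToken = Callable[[List[int]], int]

def speculative_step(target: NextToken, draft: NextToken,
                     tokens: List[int], k: int = 4) -> List[int]:
    """Run one draft-then-verify round and return the accepted tokens."""
    # 1. The cheap draft model proposes k tokens one after another.
    proposed: List[int] = []
    ctx = list(tokens)
    for _ in range(k):
        t = draft(ctx)
        proposed.append(t)
        ctx.append(t)

    # 2. The target model checks each proposal. A real engine scores all
    #    k positions in a single batched forward pass, which is where the
    #    speedup comes from; the loop here is only for readability.
    accepted: List[int] = []
    ctx = list(tokens)
    for t in proposed:
        expected = target(ctx)
        if expected != t:
            accepted.append(expected)  # keep the target's token, drop the rest
            return accepted
        accepted.append(t)
        ctx.append(t)

    # 3. Every draft token matched, so the target adds one bonus token.
    accepted.append(target(ctx))
    return accepted

def generate(target: NextToken, draft: NextToken,
             prompt: List[int], max_new: int, k: int = 4) -> List[int]:
    """Generate max_new tokens using repeated speculative rounds."""
    out = list(prompt)
    while len(out) - len(prompt) < max_new:
        out.extend(speculative_step(target, draft, out, k))
    return out[:len(prompt) + max_new]

# Toy stand-ins (hypothetical): the target counts upward modulo 10,
# and the draft agrees except right after a 4, where it guesses wrong.
def toy_target(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10

def toy_draft(ctx: List[int]) -> int:
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10

if __name__ == "__main__":
    print(generate(toy_target, toy_draft, [2], max_new=8))
```

With greedy verification like this, the output is identical to what the target model would produce on its own; the draft only changes how many target passes are needed, which is why the gain depends on how often the draft guesses right.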

Dev branch: working on adding DeepSeek V4 Flash support, which is proving difficult, but I've made really good progress. Had to quantize it down to 2-bit so it fits on my 128 GB Mac.

Main branch: supports most MLX models, including Qwen 3.6, Gemma, MoE, MTP/assist models, dense models, etc.

UI: native Swift app that launches the Zig server and handles model downloads, the chat UI, and a task tray icon. It can launch coding agents if you have them installed, and it also has an optional built-in agent with MCP support.