
I’ve refactored the coroutine code to be completely standalone, not depending on anything else inside zio, in case anybody wants to use it. It could even become a library of its own. I also added RISC-V support, and had a little fun implementing a tiny channel for a ping-pong test. Stack reservation in virtual memory will soon be part of this standalone library too.
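For anyone curious what a coroutine ping-pong over a one-slot channel looks like, here is a minimal C sketch. It assumes Linux/glibc and uses the `ucontext` functions (`getcontext`, `makecontext`, `swapcontext`) instead of zio.coro's own context switching; `ping`, `pong`, `channel_value` and `run_ping_pong` are hypothetical names, not zio APIs.

```c
#include <assert.h>
#include <stddef.h>
#include <ucontext.h>

#define STACK_SIZE (64 * 1024)
#define ROUNDS 5

static ucontext_t main_ctx, ping_ctx, pong_ctx;
static char ping_stack[STACK_SIZE], pong_stack[STACK_SIZE];

/* Hypothetical one-slot "channel": the sender stores a value, then switches
   directly to the receiver, which replies in the same slot. */
static int channel_value;
static int pong_total;   /* sum of everything pong received */
static int ping_replies; /* number of correct replies ping saw */

static void ping(void) {
    for (int i = 1; i <= ROUNDS; i++) {
        channel_value = i;                  /* send */
        swapcontext(&ping_ctx, &pong_ctx);  /* yield to pong */
        if (channel_value == -i)            /* pong answered with the negation */
            ping_replies++;
    }
    /* Returning from ping resumes uc_link, i.e. main_ctx. */
}

static void pong(void) {
    for (;;) {
        pong_total += channel_value;        /* receive */
        channel_value = -channel_value;     /* reply */
        swapcontext(&pong_ctx, &ping_ctx);  /* yield back to ping */
    }
}

static int init_coroutine(ucontext_t *ctx, char *stack, size_t size, void (*fn)(void)) {
    if (getcontext(ctx) != 0) return -1;
    ctx->uc_stack.ss_sp = stack;
    ctx->uc_stack.ss_size = size;
    ctx->uc_link = &main_ctx;               /* where to continue when fn returns */
    makecontext(ctx, fn, 0);
    return 0;
}

/* Runs the exchange once and returns how many replies ping received. */
static int run_ping_pong(void) {
    if (init_coroutine(&ping_ctx, ping_stack, sizeof ping_stack, ping) != 0 ||
        init_coroutine(&pong_ctx, pong_stack, sizeof pong_stack, pong) != 0)
        return -1;
    swapcontext(&main_ctx, &ping_ctx);      /* runs until ping returns */
    return ping_replies;
}
```

The sketch is one-shot (pong is simply left suspended at the end); a real runtime would have a scheduler decide who runs next instead of the coroutines switching to each other by name.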

This took a bit of refactoring to satisfy the way std.Io wants to do closures, but I have the first version of async/concurrent/await/cancel working. Now that this is done, implementing the I/O operations should go much quicker. The zig-0.16 branch still has a long way to go, though, as std.posix is being removed and the code in libxev depends on it.

I spun off this project, my effort to replace libxev in zio. There are many reasons why I’d prefer something slightly different from libxev: consistent cancellation, immediate timer changes on all backends, less wasted stack space, and better handling of vectored reads/writes. What actually got me working on this is the Zig 0.16 migration: as std.posix is being dismantled, it would be very difficult to upgrade libxev, so I decided to take it a step further and replace it completely, while making the new design a better fit for zio. It supports Linux, Windows, macOS, FreeBSD and NetBSD. It’s currently missing an IOCP backend for Windows, but I don’t think that’s a huge problem, as I expect Windows to be used only as a development platform, not for deployment. I’m also planning to make it multi-threading aware, to help with thread pinning in the zio runtime, because that depends on backend specifics.

This is the project:

The code it requires you to write is very verbose, so it should not be used directly:

Extracted another library from the code. It’s beneficial to have more extensive CI testing for it, so I moved it out of zio. It could also be used by someone else who wants to experiment.

To add, apparently glibc has getaddrinfo_a.

FreeBSD has the getdns library, even if that’s not part of their libc.

OpenBSD has getaddrinfo_async, but it seems like andrewrk has already found it, since searching for “openbsd getaddrinfo_async” shows a Zig issue about it as the top result for me.

Windows has DnsQueryEx.

Big news: we now have growable stacks. They start at 64KiB and can grow up to 8MiB (both configurable). I previously had problems with Windows, because std.os.windows uses a lot of stack, so I always allocated 2MiB there. With this change, I don’t need to worry about it: stacks start at 64KiB everywhere and grow as needed. It uses a SIGSEGV/SIGBUS signal handler on POSIX platforms and the built-in automatic stack growth on Windows. I’m super happy with this. Another benefit is that, instead of plainly crashing on stack overflow, it now prints an informative message before aborting. The stacks are also registered with Valgrind, which makes debugging memory issues much easier. With this change, people basically don’t need to worry about stack size anymore.
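To illustrate the POSIX side of the mechanism, here is a minimal sketch assuming Linux/glibc; it is not zio's code, and `struct growable_stack`, `stack_init` and `on_fault` are hypothetical names. It reserves 8 MiB of address space as `PROT_NONE`, commits the top 64 KiB, and installs a SIGSEGV/SIGBUS handler (on an alternate signal stack, since the faulting stack is unusable) that commits more pages when a fault lands in the reserved area. The lowest page stays a hard guard page and produces an overflow message instead. Note that `mprotect` is not on the POSIX async-signal-safe list, although calling it from a fault handler works on Linux in practice.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <signal.h>
#include <stdint.h>
#include <string.h>
#include <sys/mman.h>
#include <unistd.h>

#define MAX_STACK     ((size_t)8 << 20)  /* 8 MiB of address space reserved */
#define INITIAL_STACK ((size_t)64 << 10) /* 64 KiB committed up front */

struct growable_stack {
    uint8_t *base;               /* lowest reserved address; first page is a hard guard */
    uint8_t *volatile committed; /* lowest read/write address (the stack grows down) */
    size_t page;
};

static struct growable_stack g_stack; /* a single stack, to keep the sketch short */

static void on_fault(int sig, siginfo_t *info, void *uctx) {
    (void)uctx;
    struct growable_stack *s = &g_stack;
    uint8_t *addr = info->si_addr;

    if (addr >= s->base + s->page && addr < s->committed) {
        /* Fault in the reserved-but-uncommitted area: commit down to that page
           and return, so the faulting access is retried and now succeeds. */
        uint8_t *low = (uint8_t *)((uintptr_t)addr & ~(uintptr_t)(s->page - 1));
        if (mprotect(low, (size_t)(s->committed - low), PROT_READ | PROT_WRITE) == 0) {
            s->committed = low;
            return;
        }
    } else if (addr >= s->base && addr < s->base + s->page) {
        static const char msg[] = "coroutine stack overflow\n";
        (void)!write(STDERR_FILENO, msg, sizeof msg - 1); /* write() is signal-safe */
    }
    /* Anything else: restore the default action; the retried access then kills us. */
    signal(sig, SIG_DFL);
}

static int stack_init(void) {
    struct growable_stack *s = &g_stack;
    s->page = (size_t)sysconf(_SC_PAGESIZE);

    void *p = mmap(NULL, MAX_STACK, PROT_NONE,
                   MAP_PRIVATE | MAP_ANONYMOUS | MAP_NORESERVE, -1, 0);
    if (p == MAP_FAILED) return -1;
    s->base = p;
    s->committed = s->base + MAX_STACK - INITIAL_STACK;
    if (mprotect(s->committed, INITIAL_STACK, PROT_READ | PROT_WRITE) != 0) return -1;

    /* The handler must not run on the stack that just overflowed. */
    static uint8_t alt[64 << 10];
    stack_t ss = { .ss_sp = alt, .ss_size = sizeof alt, .ss_flags = 0 };
    if (sigaltstack(&ss, NULL) != 0) return -1;

    struct sigaction sa;
    memset(&sa, 0, sizeof sa);
    sa.sa_sigaction = on_fault;
    sa.sa_flags = SA_SIGINFO | SA_ONSTACK;
    sigemptyset(&sa.sa_mask);
    if (sigaction(SIGSEGV, &sa, NULL) != 0) return -1;
    return sigaction(SIGBUS, &sa, NULL);
}
```

For brevity, the sketch touches the reserved memory directly instead of running a coroutine on it. A real runtime would point each coroutine's stack pointer at the top of its own reservation, look up the faulting stack per thread, and give every thread its own alternate signal stack.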

“640k ought to be enough for anybody”,

just needless to quote

I replaced libxev with my custom event loop over the last few weeks. It was a nice experience, and I realized that some of the libxev backends were a bit hacky or less optimized than they could be. This rewrite makes the code easier to maintain, and I added support for more BSDs. It will also make it easier to use the native multi-threading characteristics of each backend. The biggest outlier is IOCP, which basically requires being used as a multi-threaded load balancer, while most of the other backends prefer (or need) one instance per thread. So I’m reworking how coroutine migration across threads works to accommodate this.

stupid me!!!

libanl launches a thread for some (short) period of time

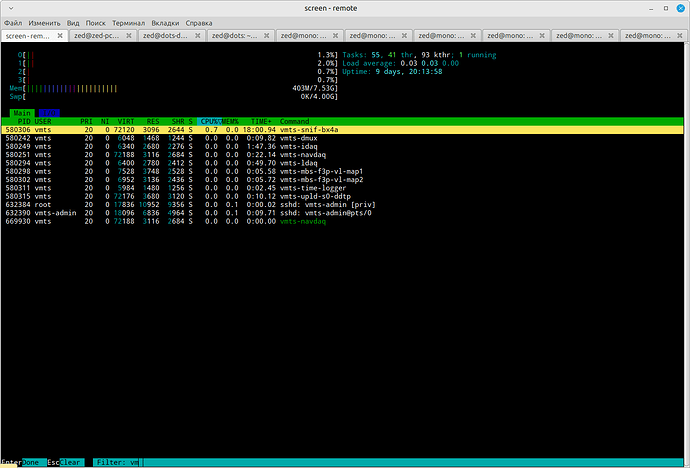

I noticed this while observing the behaviour of some of my programs with `htop` (thanks to its author!).

Note the process name marked in green: it’s a thread launched by `vmts-navdaq`, which is on the 4th line (counting from 1).

Have you figured out a nice way to do DNS resolution when linking libc?

So, yes, the only way to do DNS resolution truly concurrently with the rest of the process’s activity is to implement the protocol by hand (MEM/CPU vs I/O, remember?), and here you have two options:

- EDSM (event-driven state machines)

- coroutines

which one is better, I really do not know (yet)
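To make the EDSM option a bit more concrete: instead of a coroutine that blocks in the middle of the protocol, each in-flight lookup is a small struct with an explicit state, and the event loop feeds it readiness and timeout events. This is a toy C sketch under my own assumptions. The states, events and retry policy are hypothetical, and it does no actual DNS encoding or parsing.

```c
#include <assert.h>

/* Hypothetical resolver states; a real one would also own a socket, buffers, etc. */
enum resolver_state { RS_SEND_QUERY, RS_WAIT_REPLY, RS_DONE, RS_FAILED };
enum resolver_event { EV_WRITABLE, EV_READABLE, EV_TIMEOUT };

struct resolver {
    enum resolver_state state;
    int retries_left;
};

/* One step of the state machine: the event loop calls this whenever an event
   arrives for the resolver's socket. Nothing here ever blocks. */
static void resolver_on_event(struct resolver *r, enum resolver_event ev) {
    switch (r->state) {
    case RS_SEND_QUERY:
        if (ev == EV_WRITABLE) r->state = RS_WAIT_REPLY;   /* query sent */
        break;
    case RS_WAIT_REPLY:
        if (ev == EV_READABLE) {
            r->state = RS_DONE;                            /* reply parsed */
        } else if (ev == EV_TIMEOUT) {
            /* retry while we have retries left, otherwise give up */
            r->state = r->retries_left-- > 0 ? RS_SEND_QUERY : RS_FAILED;
        }
        break;
    case RS_DONE:
    case RS_FAILED:
        break;                                             /* terminal states */
    }
}
```

The trade-off versus coroutines is visible even at this size. All per-lookup context has to live in the struct, while a coroutine keeps it implicitly on its own stack. That costs memory per in-flight lookup, which is the MEM/CPU vs I/O tension mentioned above.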

I’ve just released version 0.5.0:

This is a major release with many changes. It has been in development for a while, but I finally decided to release it.

First of all, the codebase has been relicensed under the MIT license.

I replaced libxev with a custom I/O event loop that has better cross-platform support, natively supports multiple threads each running its own event loop, supports more filesystem operations, and offers consistent timer behavior across platforms, grouped operations, and more. This is available in zio.ev and can also be used separately from the rest of the library. This switch was motivated by Zig 0.16, which removed a lot of lower-level I/O APIs, making it hard to upgrade libxev, but in the end, I’m glad I did it. The new event loop is more feature-complete, more efficient, and more flexible.

The coroutine library has also been restructured, and it’s now available in zio.coro.

I’ve added support for riscv64 and loongarch64 CPUs. Stack allocation has been completely rewritten: it now properly allocates virtual memory from the operating system, marks guard pages, and we also have signal handlers for growing the virtual memory reservation on demand. Coroutines now start with 64KiB of stack space and grow dynamically as needed.

The zio.select() function has been completely rewritten, and now has comptime-based support for waiting on things other than tasks. For example, you can use it to race two channel reads, or add timeout support to any operation that doesn’t handle timeouts natively.
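zio.select() itself is comptime-based Zig. At the OS level, the closest analogue to racing two reads with a timeout is a single `poll()` over both descriptors. Here is a minimal C sketch of that idea; `race_read` is a hypothetical helper, not part of zio's API.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <errno.h>
#include <poll.h>
#include <unistd.h>

/* Waits until either fd_a or fd_b is readable, reads one byte from the winner
   into *out and returns its index (0 or 1). Returns -1 on timeout, -2 on error. */
static int race_read(int fd_a, int fd_b, int timeout_ms, char *out) {
    struct pollfd fds[2] = {
        { .fd = fd_a, .events = POLLIN },
        { .fd = fd_b, .events = POLLIN },
    };
    int n;
    do {
        /* Note: retrying after EINTR restarts the full timeout; a real
           implementation would recompute it from a deadline. */
        n = poll(fds, 2, timeout_ms);
    } while (n < 0 && errno == EINTR);
    if (n < 0) return -2;
    if (n == 0) return -1;                 /* nobody won: timeout */
    for (int i = 0; i < 2; i++) {
        if (fds[i].revents & (POLLIN | POLLHUP)) {
            if (read(fds[i].fd, out, 1) != 1) return -2;
            return i;                      /* first ready descriptor wins */
        }
    }
    return -2;
}
```

The loser is left untouched, so no data is lost from it. In a coroutine runtime, the equivalent concern is cancelling the losing operation cleanly, which is what makes a generic select harder than this fd-only version.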

There is now zio.AutoCancel for automatically cancelling the current task after a timeout.

This is useful when you want to call an arbitrary function that may take a long time to complete, and you want to make sure it gets cancelled if it doesn’t complete in a timely manner, for example, in HTTP request handlers.

Many networking APIs now have direct timeout support. Additionally, in zio.net.Stream.Reader and zio.net.Stream.Writer, you can call setTimeout() and it will make sure the underlying std.Io.Reader or std.Io.Writer doesn’t block for too long. This is similar to POSIX socket read/write timeouts, but also supports absolute deadlines.
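The difference between a per-operation timeout (like `SO_RCVTIMEO`) and an absolute deadline shows up on multi-step reads. With a deadline, every partial read only gets the remaining time, so a peer that trickles data one byte at a time can't stretch the total wait. Here is a minimal C sketch of that idea using `poll()` and `CLOCK_MONOTONIC`. `read_full_deadline` and `now_ms` are hypothetical helpers, not zio's `setTimeout()`.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <errno.h>
#include <limits.h>
#include <poll.h>
#include <stdint.h>
#include <time.h>
#include <unistd.h>

static int64_t now_ms(void) {
    struct timespec ts;
    clock_gettime(CLOCK_MONOTONIC, &ts);
    return (int64_t)ts.tv_sec * 1000 + ts.tv_nsec / 1000000;
}

/* Reads exactly len bytes unless EOF comes first. Fails with errno = ETIMEDOUT
   once the absolute deadline (in now_ms() units) passes; bytes already read
   are in buf but are not reported in that case. */
static ssize_t read_full_deadline(int fd, void *buf, size_t len, int64_t deadline_ms) {
    size_t done = 0;
    while (done < len) {
        int64_t remaining = deadline_ms - now_ms();  /* shrinks on every iteration */
        if (remaining <= 0) { errno = ETIMEDOUT; return -1; }
        struct pollfd pfd = { .fd = fd, .events = POLLIN };
        int n = poll(&pfd, 1, remaining > INT_MAX ? INT_MAX : (int)remaining);
        if (n < 0) { if (errno == EINTR) continue; return -1; }
        if (n == 0) { errno = ETIMEDOUT; return -1; }
        ssize_t r = read(fd, (char *)buf + done, len - done);
        if (r < 0) { if (errno == EINTR) continue; return -1; }
        if (r == 0) break;                           /* EOF: return the short count */
        done += (size_t)r;
    }
    return (ssize_t)done;
}
```

A relative timeout is then just `read_full_deadline(fd, buf, len, now_ms() + timeout)`, while a deadline computed once (e.g. per request) can be passed unchanged to every read and write that belongs to that request.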

Many new APIs have been added, for compatibility with the future std.Io API.

Internally, I’ve done a lot of refactoring to prepare for a future scheduler replacement.

I started the project with an event-loop-per-thread model, and I still think it’s the better approach for servers, but I’m slowly migrating to a hybrid model, where tasks primarily stick to the thread they were created on, but can also be freely moved to other threads when it’s beneficial for load balancing.

The ecosystem is now becoming complete and usable enough for me to stop yak-shaving and start working on my original application.

Libraries that can utilize the asynchronous I/O from zio:

- HTTP client and server: lalinsky/dusty (HTTP client/server library for Zig)

- PostgreSQL client: lalinsky/pg.zig (native PostgreSQL driver/client for Zig, async fork)

- NATS client: lalinsky/nats.zig (a Zig client library for NATS)

I also had another idea that I might try to implement when I have some time to experiment. In zio, I already have coroutine context switching, a scheduler, timers, mutexes and condition variables, all in user space. That’s very similar to what you need to implement a mini “OS” for embedded systems. If I add ARM Cortex-M assembly context switching, optimize some memory use, and use MicroZig to integrate with some popular boards, I could have an alternative to FreeRTOS for some specific use cases, with a std.Io implementation as a free bonus.

As a stepping stone to the embedded world, I implemented 32-bit ARM support, and it works just fine on Linux, so it’s usable on some of the older Raspberry Pis, for example. I’m really curious to see this work on an ARM microcontroller; I’ll have to get a Pico W to experiment with.

Oh, that sounds fun. I’ve been toying with the idea of writing an RTOS in Zig along very similar lines for a while now. I have a couple of Picos (non-W unfortunately) lying around that I’ve been looking for an excuse to use for something.

Some shower thoughts on 32-bit support:

- I’d suggest reducing the max stack size on 32-bit Linux. `mmap` aligns to a 4 KiB page boundary, so your virtual address space is going to get fragmented pretty quickly if you’re reserving 8MiB stacks, and you’ll get OOM errors well before you actually run out of virtual address space.

- On Linux, aligning the reservations is possible by doing an `mmap(2 * max_stack_size)` followed by a couple of `munmap` calls on either side of the allocation to trim it to `max_stack_size`. I don’t think there’s a nicer way. This should reduce fragmentation, but I guess it also depends on the allocation patterns. On FreeBSD and NetBSD you can use `MAP_ALIGNED` for this, which is a bit more elegant than the brute-force trick above. However, it will in turn make it more likely that you’ll run into an OOM error due to the larger request, so I would only suggest doing this in combination with reducing the max stack size.
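The over-allocate-and-trim trick from the second point could look like this in C. It's a minimal sketch assuming Linux/glibc (`MAP_ANONYMOUS`) and a `size` that is a power of two and a multiple of the page size. `reserve_aligned` is a hypothetical name.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/mman.h>

/* Reserves `size` bytes of PROT_NONE address space aligned to `size`. */
static void *reserve_aligned(size_t size) {
    size_t span = 2 * size;  /* twice as much guarantees an aligned window fits */
    uint8_t *raw = mmap(NULL, span, PROT_NONE, MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (raw == MAP_FAILED) return NULL;

    uintptr_t aligned = ((uintptr_t)raw + size - 1) & ~(uintptr_t)(size - 1);
    uint8_t *start = (uint8_t *)aligned;
    size_t head = (size_t)(start - raw);  /* unaligned prefix to give back */
    size_t tail = span - head - size;     /* leftover suffix to give back */

    if (head) munmap(raw, head);
    if (tail) munmap(start + size, tail);
    return start;
}
```

The temporary `2 * size` request is exactly what makes this more OOM-prone on 32-bit, which is why it pairs naturally with a smaller max stack size.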

Yeah, the stack configuration will need to change, but that’s already user-configurable, so I’m not too concerned about it. Instead of hard-coding things, I’ll likely make it possible to swap the stack allocator completely, because the VM approach is obviously not usable in the embedded world. And even in some other situations on 64-bit platforms, it might be beneficial to use a regular std.mem.Allocator to allocate the stacks. That’s how I’m thinking about the microcontroller implementation: it’s most likely going to be a build-time option to allow for X stacks, or even to give each task a manually assigned stack (similar to how Zig plans to deal with recursion in the future).

But I believe the architecture of zio is already better designed for writing server applications than the planned `std.Io.Evented`, so the runtime will stay.
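The swappable stack allocator with a build-time pool of X stacks, as described above, could look roughly like this. This is a hypothetical C sketch, not zio's design: `struct stack_allocator`, `STACK_COUNT` and `POOL_STACK_SIZE` are made-up names. The pool holds a fixed number of statically allocated stacks, and the runtime would only see the two callbacks, so a VM-backed or heap-backed allocator could be swapped in instead.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* The only interface the runtime would depend on. */
struct stack_allocator {
    void *ctx;
    void *(*alloc)(void *ctx, size_t *size_out);
    void (*release)(void *ctx, void *stack);
};

#ifndef STACK_COUNT
#define STACK_COUNT 4          /* build-time option: maximum number of tasks */
#endif
#define POOL_STACK_SIZE 4096   /* per-task stack size in the pool */
_Static_assert(STACK_COUNT <= 32, "the 'used' bitmask holds at most 32 stacks");

struct stack_pool {
    _Alignas(16) uint8_t stacks[STACK_COUNT][POOL_STACK_SIZE];
    uint32_t used;             /* bit i set => stacks[i] is taken */
};

static void *pool_alloc(void *ctx, size_t *size_out) {
    struct stack_pool *pool = ctx;
    for (unsigned i = 0; i < STACK_COUNT; i++) {
        if (!(pool->used & (1u << i))) {
            pool->used |= 1u << i;
            *size_out = POOL_STACK_SIZE;
            return pool->stacks[i];
        }
    }
    return NULL;               /* pool exhausted: task creation fails, no heap fallback */
}

static void pool_release(void *ctx, void *stack) {
    struct stack_pool *pool = ctx;
    size_t i = (size_t)((uint8_t *)stack - &pool->stacks[0][0]) / POOL_STACK_SIZE;
    assert(i < STACK_COUNT && pool->stacks[i] == (uint8_t *)stack);
    pool->used &= ~(1u << i);
}

static struct stack_pool g_pool;
static const struct stack_allocator pool_allocator = { &g_pool, pool_alloc, pool_release };
```

Because all memory is reserved statically, the worst case is known at link time, which is usually what you want on a microcontroller.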

Can you briefly say what makes it better in that respect?

This is off-topic here, so I’ll be short:

- Timeouts everywhere: this is critical for any server in production. Because `Io` tries to be a universal platform, it unfortunately takes two extra concurrent tasks to implement cancellation of tasks that take too long.

- The ability to wait on blocking tasks from a non-blocking context. I find this extremely important: even though I want a non-blocking networking stack, you can’t avoid blocking code in practice, and having an easy way to call it and wait for it ensures you are not blocking the event loop. Once Zig 0.16 is released, I’ll express this by having two `Io` implementations, one for blocking I/O and one for non-blocking, and they will be able to wait on each other’s futures/mutexes/etc.

- Access to the underlying event loop through a cross-platform layer, which can also be used as a user API when integrating existing C libraries. I find this important for the ecosystem. Many libraries like hiredis, libpq and c-ares have hooks for integrating with existing event loops. With `std.Io.Evented`, each platform will use a completely different event loop, so this becomes much harder.

- This is subjective, but I believe the layered approach I use in zio allows it to be more consistent across multiple platforms, as it’s much easier to test when there is some separation between the layers.
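The second point (waiting on blocking work without blocking the event loop) can be sketched with a generic pattern in plain C. The blocking call runs on a helper thread, which signals completion through a pipe that the event loop watches like any other file descriptor. This is not how zio or its planned pair of `Io` implementations does it. It assumes one thread per job, and `struct blocking_job`, `job_start`, `job_finish` and `slow_square` are hypothetical names. A real runtime would use a thread pool and resume the waiting coroutine instead of polling directly.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <poll.h>
#include <pthread.h>
#include <time.h>
#include <unistd.h>

/* A unit of blocking work handed off to a helper thread. */
struct blocking_job {
    long (*fn)(long);   /* the blocking function to run */
    long arg, result;
    int notify[2];      /* pipe: the worker writes one byte when done */
    pthread_t thread;
};

static void *job_main(void *p) {
    struct blocking_job *job = p;
    job->result = job->fn(job->arg);         /* allowed to block here */
    char done = 1;
    (void)!write(job->notify[1], &done, 1);  /* make notify[0] readable */
    return NULL;
}

static int job_start(struct blocking_job *job, long (*fn)(long), long arg) {
    job->fn = fn;
    job->arg = arg;
    if (pipe(job->notify) != 0) return -1;
    if (pthread_create(&job->thread, NULL, job_main, job) != 0) {
        close(job->notify[0]);
        close(job->notify[1]);
        return -1;
    }
    return 0;
}

/* Called by the event loop once poll() reports job->notify[0] readable,
   so the join below does not wait on the blocking work itself. */
static long job_finish(struct blocking_job *job) {
    char done;
    (void)!read(job->notify[0], &done, 1);
    pthread_join(job->thread, NULL);         /* also makes job->result visible */
    close(job->notify[0]);
    close(job->notify[1]);
    return job->result;
}

/* Example blocking function: sleeps 20 ms, then returns x * x. */
static long slow_square(long x) {
    struct timespec ts = { .tv_sec = 0, .tv_nsec = 20 * 1000 * 1000 };
    nanosleep(&ts, NULL);
    return x * x;
}
```

Usage: after `job_start(&job, slow_square, 7)`, the loop adds `job.notify[0]` to its `poll()` set next to its sockets and calls `job_finish(&job)` when it fires, which returns 49.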

[mods, feel free to move this to the zio thread]